
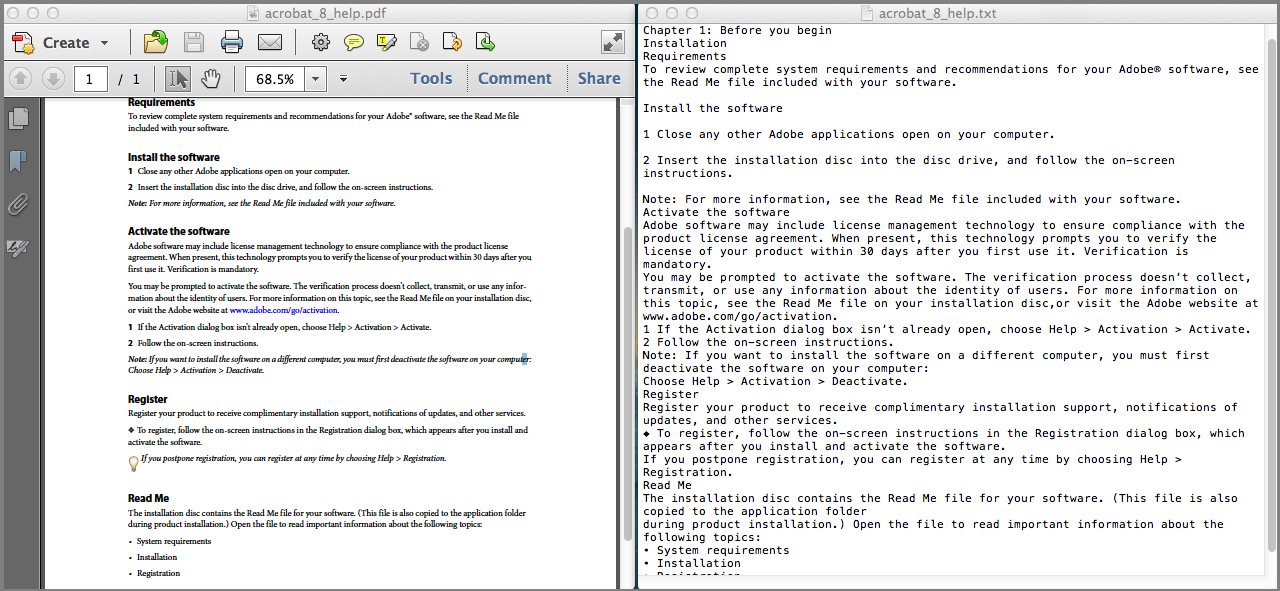

1/5/2024 0 Comments Hadoop convert pdf to text The PdfRecordReader code is written below.

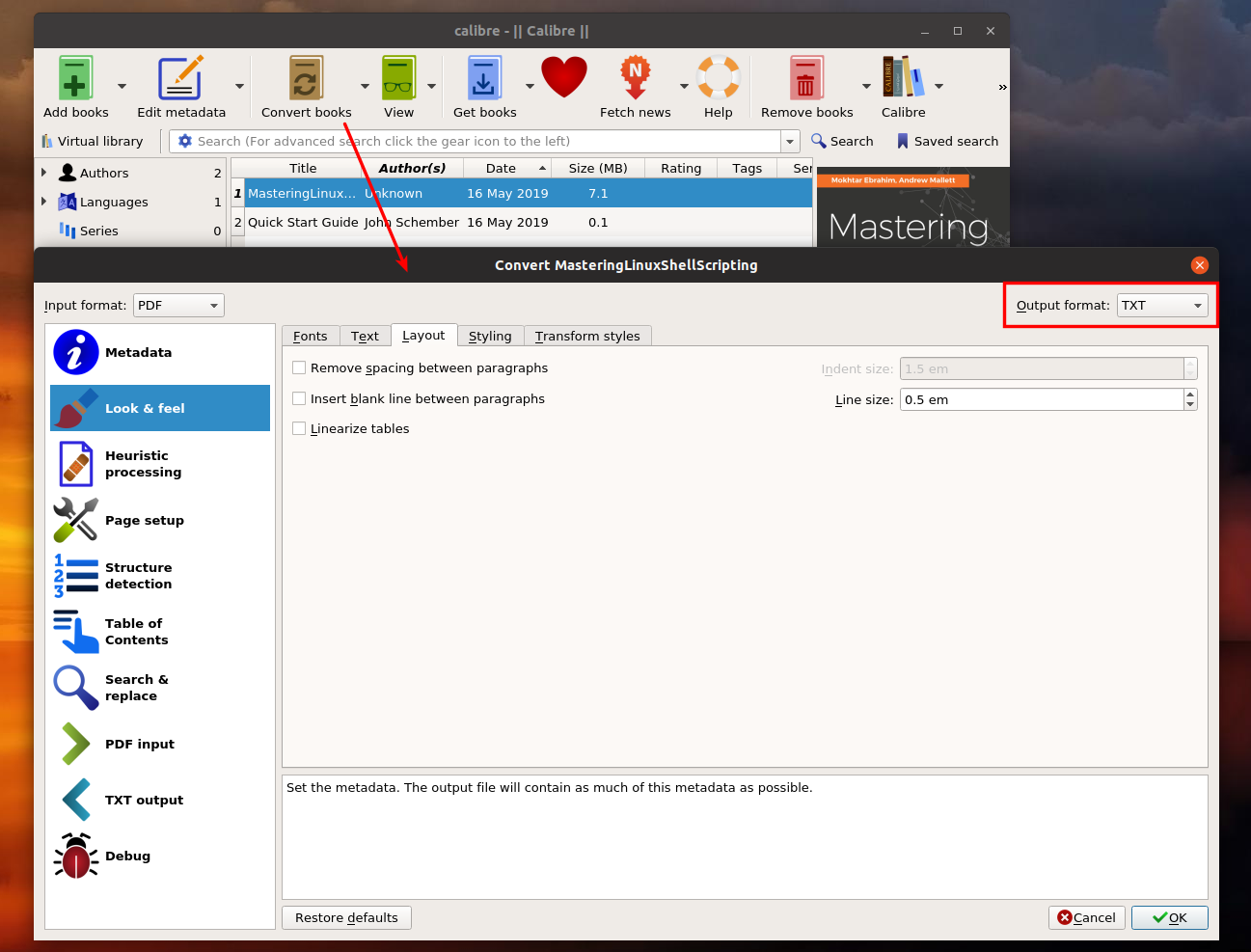

So the logic for checking getting from the array, setting it as key and value, checking for the completion condition etc are written in the code. So I am planning to send line number as the key and each line as value. We need to send this as a key-value pair. Then we splits the text into multiple lines by using ‘/n’ as the splitter and we will store this lines in an array. The output of the pdf parser will be a text which will be stored in a variable. This method will get the input split and we parses the input split using our pdf parser logic. We are applying our pdf parsing logic in this method. Initialize(), nextKeyValue(),getCurrentKey(),getCurrentValue(), getProgress(), close(). This mainly contains five methods which is inherited from the parent RecordReader class. The PdfRecordReader is a custom class created by us extending the RecordReader. InputSplit split, TaskAttemptContext context) throws IOException, Public class PdfInputFormat extends FileInputFormat RecordReader createRecordReader( The code for PdfInputFormat is given below. We are calling our custom PdfRecordReader class from this createRecordReader method. This will contain a method called createRecordReader which it got from the parent class. So in our case, we can also create a PdfinputFormat class extending the FileInputFormat class. If you examine the source code of hadoop, you can see that the default TextInputFormat class is extended from a parent class called FileInputFormat. Second one is we need a similar class like the default LineRecordReader for handling pdf. One is we need a similar class like the default TextInputFormat.class for pdf.

My intention here is to explain about the creation of a custom input format reader for hadoop.įor doing this logic, we need to write or modify two classes. If you want more features, you can modify it accordingly. This is a simple pdf parser which converts the text content of the pdf only. The fundamental is same.Ī simple pdf to text conversion program using java is explained in my previous post PDF to Text. Similarly you can create any custom reader of your choice. I am explain the code for implementing pdf reader logic inside hadoop. Some people might encounter the experience that they cant view a pdf file they want from a terminal when no GUI is available. Here I am explaining about the creation of a custom input format for hadoop. So we need to make hadoop compatible with this various types of input formats. It can be pdf, ppt, pst, image or anything. But in practical scenarios, our input files may not be text files. By modification, I am not meaning about the modification of the architecture or working, but the modification of its functionality and features.īy default hadoop accepts text files. It could be also in important factor if you want to update your documents frequently or you just add to to the index once and then they won't change anymore.In my opinion Hadoop is not a cooked tool or framework with readymade features, but it is an efficient framework which allows a lot of customizations based on our usecases. Without an estimation on the number of PDF files and the average size of a PDF, it would be hard to choose the best design. Upon retrieval, you would convert it back to PDF). with base64 encoding) then store this text value in a stored=true field. In this case, you would have to convert your PDF into text (e.g.

(Note that you could even accomplish the same with older version of Solr, lacking the BinaryField type. You would use a BinaryField type and you would set the stored property to true.

I think it is also possible to store the original PDF file in the Solr index as well. In this case, you would store the HBase id in the Solr index.ģ) store PDF files in the Solr index itself This is an option that is definitely feasible and I have seen several real life implementation of this design. It would also be possible to store the PDF files in a object store, like HBase. What you need to consider here, HDFS is best at storing small number of very large files, so it is not effective to store large number of relatively small PDF files in HDFS. It would be possible to store your individual PDF files in HDFS and have the HDFS path as an additional field, stored in the Solr index.
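For the fallback on older Solr versions (convert the PDF to text with base64 before storing it in a stored=true field, then convert it back on retrieval), the conversion itself needs only the JDK. The following is a minimal sketch using `java.util.Base64`. The class name `PdfBase64Codec` and its methods are hypothetical. Sending the value to Solr is not shown.

```java
import java.nio.charset.StandardCharsets;
import java.util.Arrays;
import java.util.Base64;

// Hypothetical helper: turns raw PDF bytes into base64 text that could be kept in a
// stored=true text field, and turns that text back into the original bytes.
public class PdfBase64Codec {

    // Encode the PDF bytes as base64 text before indexing.
    public static String toStoredText(byte[] pdfBytes) {
        return Base64.getEncoder().encodeToString(pdfBytes);
    }

    // Decode the stored text back into the original PDF bytes on retrieval.
    public static byte[] fromStoredText(String storedText) {
        return Base64.getDecoder().decode(storedText);
    }

    public static void main(String[] args) {
        // Stand-in bytes; a real PDF could be read with java.nio.file.Files.readAllBytes(path).
        byte[] fakePdf = "%PDF-1.4 minimal stand-in".getBytes(StandardCharsets.US_ASCII);
        String stored = toStoredText(fakePdf);
        byte[] restored = fromStoredText(stored);
        System.out.println(Arrays.equals(fakePdf, restored)); // prints "true"
    }
}
```

Base64 grows the payload by roughly a third, which is one reason a native BinaryField is preferable when it is available.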

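The key-value logic described for PdfRecordReader can be sketched without Hadoop. It splits the extracted text on '\n', emits the line number as the key and the line as the value, and stops when the array is exhausted. The class below is a hypothetical plain-Java stand-in that only mirrors the method names of Hadoop's RecordReader. A real PdfRecordReader would extend `org.apache.hadoop.mapreduce.RecordReader`, run the PDF parser on the InputSplit inside `initialize()`, and return Hadoop writable types. Here, the already-extracted text is passed in directly.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-in for the PdfRecordReader logic: it does NOT extend Hadoop's
// RecordReader, it only mirrors its method names to show the line-by-line flow.
public class LineRecordLogic {
    private String[] lines = new String[0];
    private int index = -1;        // array position of the current line
    private Integer currentKey;    // line number (key)
    private String currentValue;   // line text (value)

    // In Hadoop, initialize() would receive the InputSplit and run the PDF parser;
    // here the parser's text output is passed in and split on '\n'.
    public void initialize(String extractedText) {
        lines = extractedText.split("\n");
        index = -1;
    }

    // Moves to the next line; returns false once every line has been emitted.
    public boolean nextKeyValue() {
        index++;
        if (index >= lines.length) {
            currentKey = null;
            currentValue = null;
            return false;
        }
        currentKey = index + 1;    // 1-based line number
        currentValue = lines[index];
        return true;
    }

    public Integer getCurrentKey() { return currentKey; }

    public String getCurrentValue() { return currentValue; }

    // Fraction of lines consumed so far, between 0.0 and 1.0.
    public float getProgress() {
        if (lines.length == 0) {
            return 1.0f;
        }
        return Math.min(1.0f, (float) (index + 1) / lines.length);
    }

    public void close() { lines = new String[0]; }

    public static void main(String[] args) {
        LineRecordLogic reader = new LineRecordLogic();
        reader.initialize("first line\nsecond line\nthird line");
        List<String> out = new ArrayList<>();
        while (reader.nextKeyValue()) {
            out.add(reader.getCurrentKey() + ": " + reader.getCurrentValue());
        }
        System.out.println(out); // prints [1: first line, 2: second line, 3: third line]
        reader.close();
    }
}
```

Hadoop's own LineRecordReader uses the byte offset as the key. This sketch follows the post's choice of a line number instead.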